Scientists develop a groundbreaking robotic system that can read recipes and cook them in real time using AI-powered food recognition! 🍳

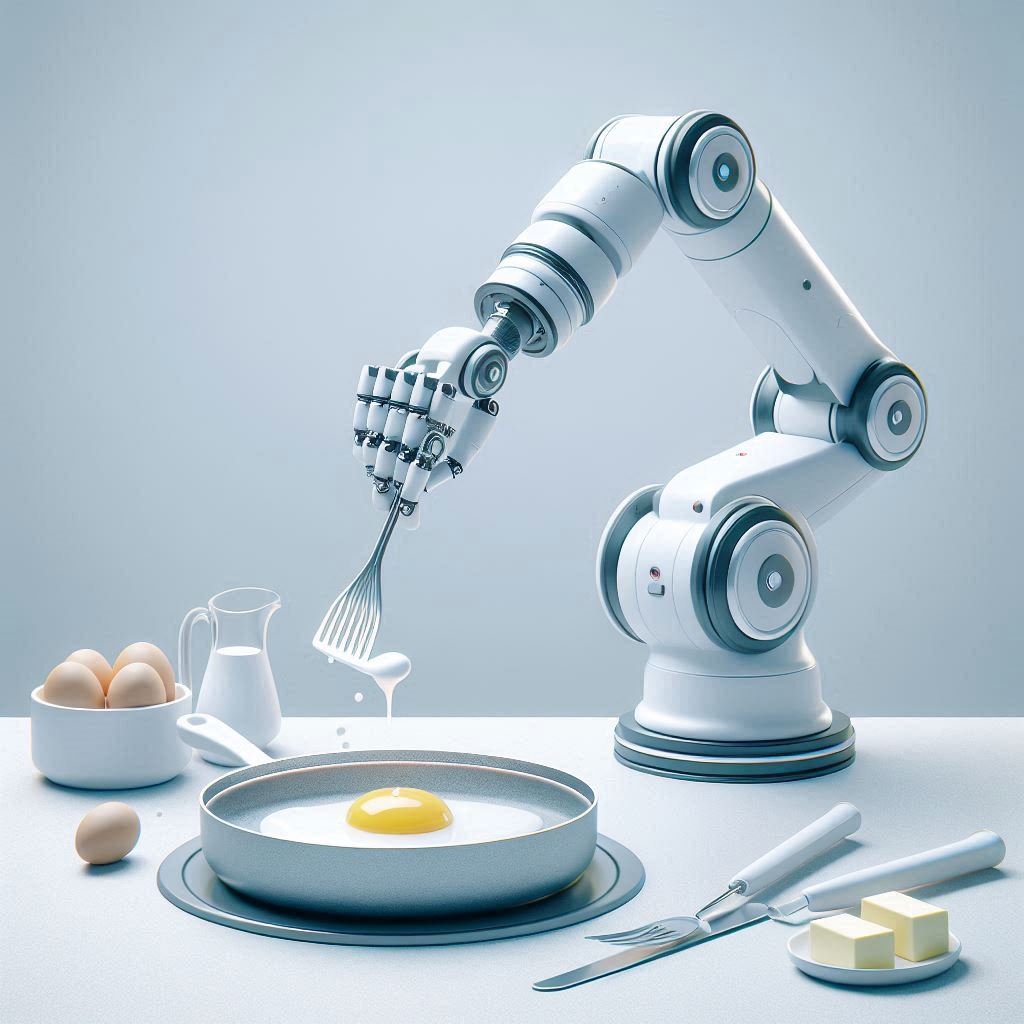

Picture this: you hand a robot a recipe for sunny-side-up eggs, and it not only understands the instructions but actually cooks them! 🍳 This isn't science fiction anymore - researchers have developed a robotic cooking system that combines Large Language Models (LLMs), classical planning techniques, and Vision-Language Models (VLMs) to make this a reality.

Their dual-armed wheeled robot, PR2, is like a master chef in the making. It doesn't just blindly follow instructions - it thinks ahead! 🤔 When you tell it to "melt butter in a pan," it uses classical planning to work out the steps the recipe leaves unstated before it starts cooking.
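One way to picture this kind of thinking ahead is a tiny planner that searches for a sequence of actions whose preconditions are met one after another. The sketch below is a toy in Python, not the researchers' actual PDDL domain: every action name, fact name, and the `plan` function itself are assumptions made up for illustration.

```python
from collections import deque
from typing import NamedTuple, Optional


class Action(NamedTuple):
    name: str
    preconditions: frozenset
    add_effects: frozenset
    delete_effects: frozenset = frozenset()


# Hypothetical toy domain (NOT the paper's PDDL): facts are plain strings.
ACTIONS = [
    Action("place-pan-on-stove", frozenset(), frozenset({"pan-on-stove"})),
    Action("put-butter-in-pan", frozenset({"pan-on-stove"}), frozenset({"butter-in-pan"})),
    Action("turn-on-stove", frozenset({"pan-on-stove"}), frozenset({"stove-on"})),
    Action(
        "wait-until-melted",
        frozenset({"butter-in-pan", "stove-on"}),
        frozenset({"butter-melted"}),
    ),
]


def plan(initial, goal, actions) -> Optional[list]:
    """Breadth-first search over sets of facts.

    Returns a shortest list of action names that reaches the goal,
    or None if the goal cannot be reached with the given actions.
    """
    start = frozenset(initial)
    goal = frozenset(goal)
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, steps = queue.popleft()
        if goal <= state:  # every goal fact holds in this state
            return steps
        for action in actions:
            if action.preconditions <= state:
                next_state = (state - action.delete_effects) | action.add_effects
                if next_state not in seen:
                    seen.add(next_state)
                    queue.append((next_state, steps + [action.name]))
    return None


# The recipe only says "melt butter"; the planner fills in the rest.
steps = plan(set(), {"butter-melted"}, ACTIONS)
print(steps)
```

Here the only stated goal is `butter-melted`, yet the search also has to place the pan, add the butter, and turn on the stove, because the final action's preconditions require them. A real planner working from a PDDL description handles far richer domains, but the idea of chaining preconditions is the same.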

But here's where it gets really interesting - the robot can actually see and understand what's happening to the food! 👀 Using a Vision-Language Model, it can recognize when butter has melted or when eggs are cooked. This means it can adjust its actions in real time, just like a human chef would.
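The "watch the food, then act" idea can be sketched as a simple loop that checks each camera frame until the food reaches the state the recipe is waiting for. This is only an illustration in Python under loud assumptions: `wait_for_state` and `fake_recognize` are hypothetical names, and the stand-in recognizer uses a made-up number per frame where the real system would query a Vision-Language Model on an image.

```python
from typing import Callable, Iterable, Optional


def wait_for_state(
    frames: Iterable,
    target: str,
    recognize: Callable[[object], str],
    max_frames: int = 100,
) -> Optional[int]:
    """Return the index of the first frame recognized as `target`.

    Gives up and returns None after `max_frames` frames, or when the
    frames run out, so the robot never waits forever.
    """
    for index, frame in enumerate(frames):
        if index >= max_frames:
            break
        if recognize(frame) == target:
            return index
    return None


def fake_recognize(frame: float) -> str:
    """Hypothetical stand-in for a VLM: each frame is a 'meltedness' score."""
    return "melted" if frame >= 0.8 else "solid"


# Simulated camera frames: the butter gets softer over time.
frames = [0.1, 0.4, 0.7, 0.9, 1.0]
melted_at = wait_for_state(frames, "melted", fake_recognize)
print(melted_at)
```

Passing the recognizer in as a function keeps the loop independent of any particular vision model, so the stub could be swapped for a real image classifier without touching the waiting logic.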

During testing, the robot successfully tackled various egg dishes, from sunny-side up to scrambled eggs, showcasing its ability to handle different cooking techniques. It even managed to prepare dishes it had never "seen" before, adapting its knowledge to new recipes! 🔄

The implications are huge - imagine restaurants with robot sous chefs, or assistance for people with disabilities in the kitchen. While we're not yet at the point of replacing human chefs entirely (and who would want to?), this technology opens up exciting possibilities for the future of cooking! 🚀

Source: Naoaki Kanazawa, Kento Kawaharazuka, Yoshiki Obinata, Kei Okada, Masayuki Inaba. Real-World Cooking Robot System from Recipes Based on Food State Recognition Using Foundation Models and PDDL. https://doi.org/10.48550/arXiv.2410.02874

From: The University of Tokyo.